Traditional document review is too slow, too manual, and too hard to scale. AlphaRoom was built to preserve expert judgment while shifting the repetitive parts of analysis into an AI-assisted workflow.

Context

Document review in M&A and related diligence work is expensive and repetitive. Experienced analysts spend much of their time reading, organizing, comparing, and summarizing sensitive material before they can get to the work that actually matters.

Most AI tools in this space focus on summarization or extraction, but they usually break trust in one of two ways: they either expose sensitive information to the wrong systems, or they flatten expert analysis into generic output that teams cannot act on.

AlphaRoom started as a way to reframe the problem. Instead of asking AI to replace expert review, the product was designed to support how strong analysts already think and work, then make that process faster, more structured, and easier to share.

Problem

- Teams were drowning in large document sets and spending too much time reading before they could analyze.

- Review quality varied by reviewer, document set, and domain familiarity.

- Sensitive information made generic cloud-AI workflows difficult to trust.

- Findings were hard to organize, compare, and share across collaborators.

- There was no coherent system from ingestion to insight to team-level review.

Constraints

- Sensitive financial and legal information needed stronger protection than a typical AI upload flow.

- The system had to support human oversight rather than hide judgment behind automated output.

- Different review styles and document types meant the workflow needed to stay flexible.

- The product had to be useful early, not after a long enterprise integration cycle.

Research Findings

The most important early insight was that the real bottleneck was not just reading speed. It was the lack of structure around how reviewers interpreted, captured, and shared what they found.

Three findings shaped the product:

- Reviewers were spending most of their time parsing and organizing rather than making decisions.

- Privacy concerns were strong enough to block adoption of otherwise promising AI approaches.

- Teams needed a shared workspace for findings, not just better extraction.

We mapped the end-to-end review lifecycle to find where AI could meaningfully support the work without taking control away from the analyst.

Key Decisions

1. Put privacy at the center of the workflow

Instead of treating privacy as a legal or infrastructure concern that sat outside the UX, I made it part of the product model. Sensitive information could be anonymized locally before cloud analysis, and the system could also support local models where needed.

This was critical because trust in the system depended on more than a privacy policy. People needed to understand that the workflow itself respected the sensitivity of the material.

2. Design for expert augmentation, not one-click automation

The system was built to surface findings, structure them, and keep the analyst in control. That meant emphasizing workflows for review, interpretation, prioritization, and collaboration instead of just generating a summary and calling it done.

3. Make collaboration part of the core product

The value was not only in faster analysis. It was in making insights easier to compare, discuss, and build on. The product needed a shared operating surface for AI findings and human interpretation.

4. Build technical flexibility into the product from the start

I designed the system to work across multiple LLM backends and deployment models so the product could adapt to privacy needs, infrastructure constraints, and evolving model quality.
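One way to picture this flexibility is a small backend interface with a selection step driven by privacy requirements. The sketch below is illustrative only: `LLMBackend`, `EchoBackend`, and `pick_backend` are hypothetical names, not AlphaRoom's actual code, and the stand-in backend echoes its prompt instead of calling a real model.

```python
from dataclasses import dataclass
from typing import Protocol


class LLMBackend(Protocol):
    """Hypothetical interface that every backend adapter would satisfy."""

    name: str
    runs_locally: bool

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoBackend:
    """Stand-in backend for illustration; a real adapter would call a model."""

    name: str
    runs_locally: bool

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def pick_backend(backends: list[LLMBackend], require_local: bool) -> LLMBackend:
    """Return the first backend that satisfies the privacy requirement."""
    for backend in backends:
        if backend.runs_locally or not require_local:
            return backend
    raise LookupError("no backend satisfies the privacy requirement")


# Assumed ordering: prefer the cloud backend unless local processing is required.
backends = [
    EchoBackend("cloud", runs_locally=False),
    EchoBackend("local", runs_locally=True),
]
```

With this shape, the rest of the workflow depends only on `complete`, so swapping or adding a backend does not touch the analysis logic.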

System / Workflow / Experience Design

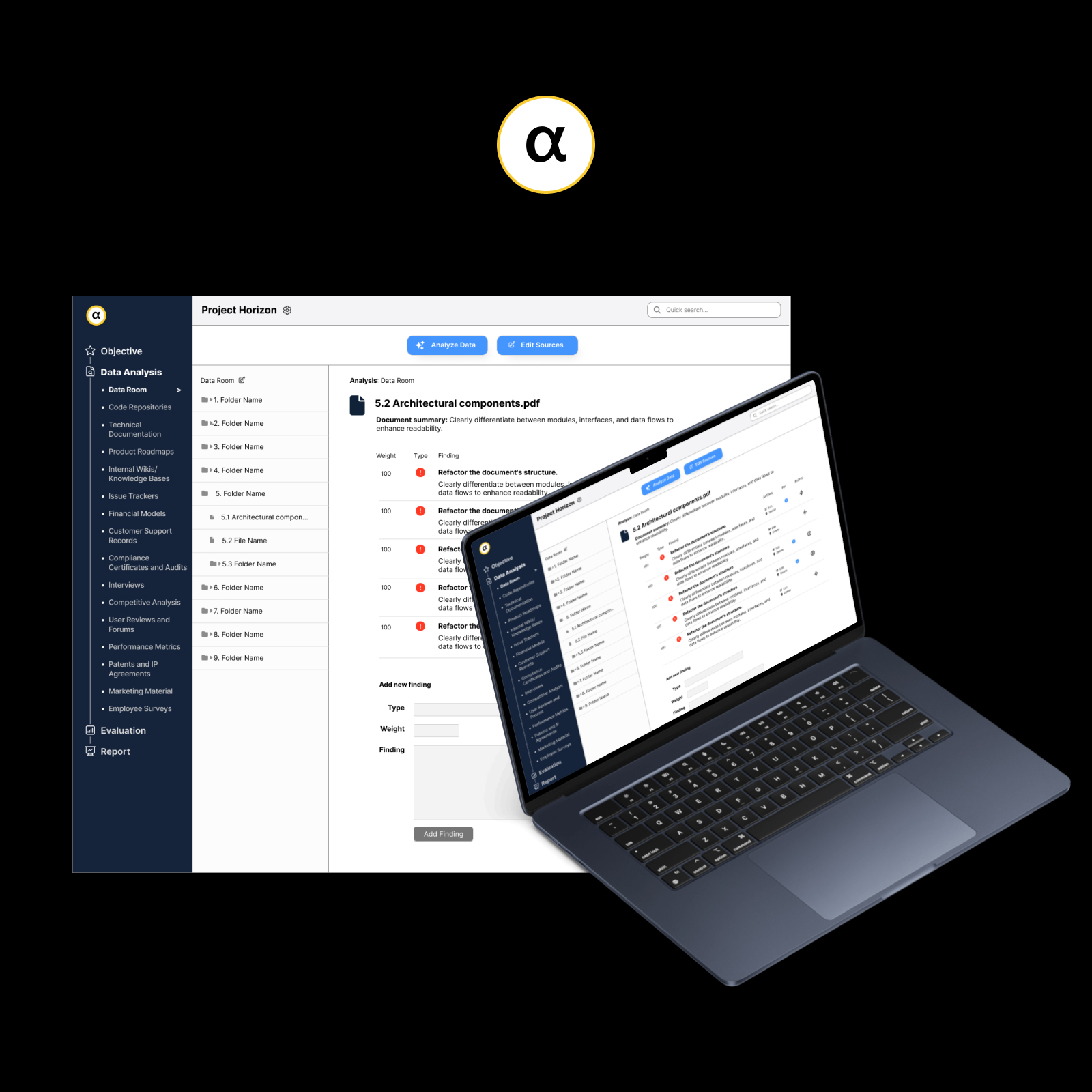

AI-assisted analysis flow

The core flow moved from upload to structured analysis to team review. Documents were chunked, processed, and summarized into consistent outputs that supported comparison rather than dumping raw model text back at the user.

The system was designed to reduce reading time while preserving a clear human decision layer.

- Integrated multiple LLM backends including OpenAI, Gemini, and Ollama

- Built custom prompts for strength and weakness identification

- Implemented chunking and structured parsing for more usable outputs

- Designed agent roles and orchestration patterns across the workflow
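The chunking and structured-parsing steps can be sketched roughly as follows. This is a minimal illustration under stated assumptions: the character-based chunk size, the overlap, and the JSON reply shape with `strengths` and `weaknesses` keys are all hypothetical, not the product's actual schema.

```python
import json
from dataclasses import dataclass


def chunk_text(text: str, size: int = 1000, overlap: int = 100) -> list[str]:
    """Split text into overlapping character windows."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    chunks: list[str] = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + size])
        if start + size >= len(text):
            break  # this window already reached the end of the text
        start += size - overlap
    return chunks


@dataclass(frozen=True)
class Finding:
    kind: str  # "strength" or "weakness"
    text: str
    chunk_index: int


def parse_findings(raw: str, chunk_index: int) -> list[Finding]:
    """Parse an assumed JSON reply into findings; malformed replies yield []."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(data, dict):
        return []
    findings: list[Finding] = []
    for key, kind in (("strengths", "strength"), ("weaknesses", "weakness")):
        items = data.get(key)
        if not isinstance(items, list):
            continue
        for item in items:
            if isinstance(item, str) and item.strip():
                findings.append(Finding(kind, item.strip(), chunk_index))
    return findings
```

Parsing every reply into the same `Finding` shape is what makes outputs comparable across documents, instead of handing raw model text back to the reviewer.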

Privacy-first architecture

The privacy model was one of the system's main differentiators. I developed an approach that could anonymize or redact sensitive information locally before cloud analysis, while still allowing richer analysis patterns when privacy constraints permitted.

- Local redaction pipeline before cloud analysis

- Support for on-premise deployment with Ollama

- Hierarchical permissions for document access

- Auditability and safer handling of sensitive material
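As a rough sketch of the local redaction idea, sensitive values can be swapped for placeholders before any text leaves the machine, with the mapping kept locally so results can be re-identified afterwards. The two regex patterns and the `redact` and `restore` helpers are assumptions for illustration; a production pipeline would likely need entity recognition and much broader coverage.

```python
import re

# Illustrative patterns only; real coverage would be far broader.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "AMOUNT": re.compile(r"\$\d[\d,]*(?:\.\d+)?"),
}


def redact(text: str) -> tuple[str, dict[str, str]]:
    """Replace sensitive values with placeholders; return text and local mapping."""
    mapping: dict[str, str] = {}  # placeholder -> original value (stays local)
    seen: dict[str, str] = {}     # original value -> placeholder

    for label, pattern in PATTERNS.items():
        def substitute(match: re.Match, label: str = label) -> str:
            value = match.group(0)
            if value not in seen:
                token = f"[{label}_{len(seen) + 1}]"
                seen[value] = token
                mapping[token] = value
            return seen[value]

        text = pattern.sub(substitute, text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into text returned from analysis."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text
```

Only the redacted text would be sent to a cloud model; the mapping never leaves the local environment, and repeated values share one placeholder so the analysis can still relate them.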

Collaborative review workspace

The interface was designed to unify AI findings and human insight in one place. Teams could review progress, navigate document trees, compare findings, and pin important discoveries without leaving the main workflow.

- Real-time progress tracking via WebSockets

- File-tree navigation for large document sets

- Shared workspace for AI findings and human notes

- Pinning and prioritization for important discoveries
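For the progress tracking, one plausible shape is a small JSON event that the server pushes to connected clients as each chunk finishes. The sketch below covers only the message payload; the `ProgressEvent` name and its fields are hypothetical, and the WebSocket transport itself is omitted.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ProgressEvent:
    """Hypothetical progress payload for one document under analysis."""

    document: str
    chunks_done: int
    chunks_total: int

    def to_message(self) -> str:
        """Serialize to the JSON text that would be sent over the socket."""
        if self.chunks_total:
            percent = round(100 * self.chunks_done / self.chunks_total)
        else:
            percent = 0  # avoid dividing by zero for empty documents
        return json.dumps({"type": "progress", **asdict(self), "percent": percent})
```

Keeping the payload explicit and typed makes it straightforward for the interface to render per-document progress without polling.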

Validation / Rollout

- Deployed with a pilot team processing 500+ documents

- Iterated on redaction accuracy based on user feedback

- Enhanced AI prompts for industry-specific analysis

- Added batch processing for large document sets

- Integrated with existing document management systems

The product was not just a concept. It was tested in real use, improved through feedback, and shaped by operational constraints as much as by interface design.

Outcomes

What I Learned

- Privacy is a product feature, not just a compliance requirement. Adoption depended on making trust visible in the workflow.

- AI works best when it strengthens expert judgment instead of trying to erase it.

- Workflow structure matters as much as model quality. Better prompts alone would not have solved the real problem.

- Builder fluency helped move the product faster. Designing and implementing the system together made it easier to test ideas end to end.

- Flexible architecture matters in AI products. Backends, deployment models, and trust requirements change fast.